DeepSeek R1 Distill Llama 8B: API Providers and Stats on OpenRouter

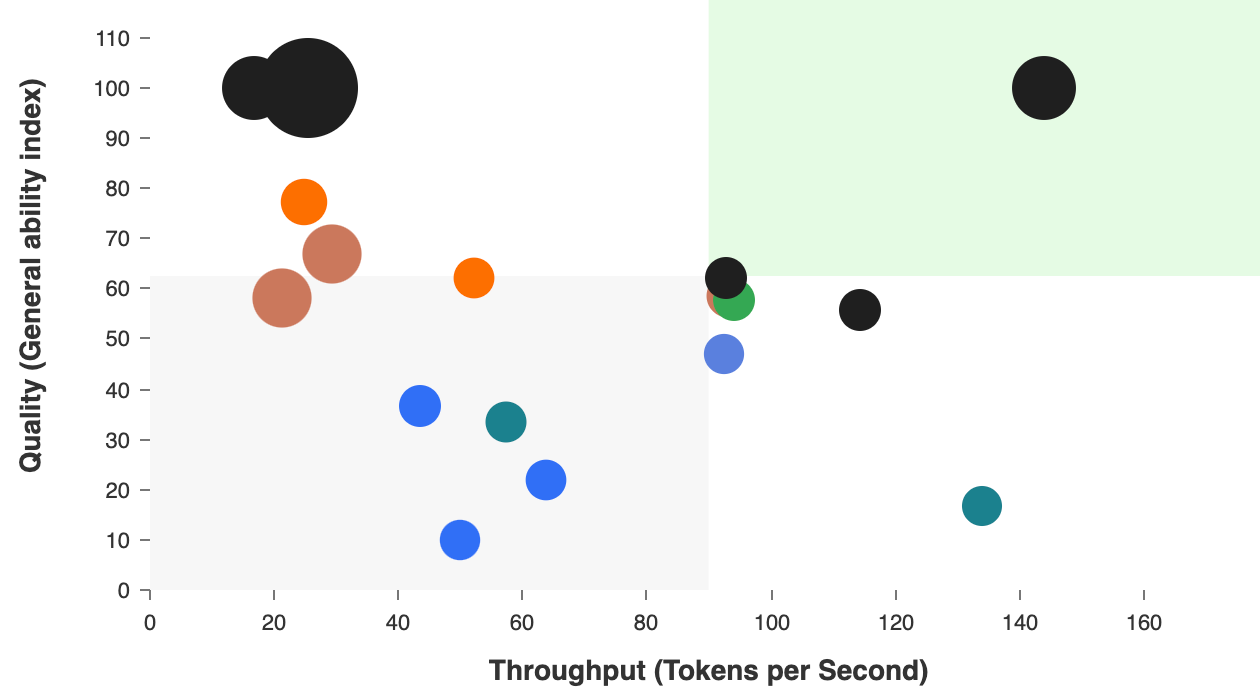

DeepSeek R1 Distill Llama 8B is a distilled large language model based on Llama 3.1 8B Instruct, trained on outputs from DeepSeek R1. The model uses distillation to achieve high performance across multiple benchmarks. OpenRouter lists it under DeepSeek with up to 32K output tokens; input and output prices per million tokens are not shown.

DeepSeek R1 Distill Llama 8B is available through an API; check the official documentation for integration details. On benchmarks, the model scores 49.0% on GPQA.

API providers for DeepSeek R1 Distill Llama 8B can be compared across performance metrics including latency (time to first token), output speed (output tokens per second), and price. OpenRouter normalizes requests and responses across providers, so the same client code works regardless of which provider serves the request.

To support the research community, DeepSeek has open-sourced DeepSeek-R1-Zero, DeepSeek-R1, and six dense models distilled from DeepSeek-R1 based on Llama and Qwen. DeepSeek-R1-Distill-Qwen-32B outperforms OpenAI o1-mini across various benchmarks, achieving new state-of-the-art results for dense models.
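The two provider metrics named above, latency (time to first token) and output speed (output tokens per second), can be computed from three timestamps and a token count. The following is a minimal sketch, not the method any particular benchmarking site uses: the `StreamTiming` type and both function names are hypothetical, and it assumes output speed is measured over the window after the first token arrives (some dashboards measure it differently).

```python
from dataclasses import dataclass


@dataclass
class StreamTiming:
    """Timestamps (in seconds) recorded while streaming one response."""
    request_sent: float   # when the request left the client
    first_token: float    # when the first output token arrived
    last_token: float     # when the final output token arrived
    output_tokens: int    # total number of output tokens received


def time_to_first_token(t: StreamTiming) -> float:
    """Latency: seconds between sending the request and the first token."""
    return t.first_token - t.request_sent


def output_speed(t: StreamTiming) -> float:
    """Output tokens per second over the generation window.

    Assumption: the window starts at the first token, so latency is
    excluded from the speed figure.
    """
    duration = t.last_token - t.first_token
    if duration <= 0:
        return 0.0  # avoid division by zero for empty or instant streams
    return t.output_tokens / duration


if __name__ == "__main__":
    sample = StreamTiming(request_sent=0.0, first_token=0.5,
                          last_token=4.5, output_tokens=200)
    print(f"TTFT: {time_to_first_token(sample):.2f} s")
    print(f"Speed: {output_speed(sample):.1f} tokens/s")
```

Keeping the two figures separate matters when comparing providers: one with a slow first token can still have the higher sustained output speed.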

For R1 0528, OpenRouter routes requests to the best providers that are able to handle your prompt size and parameters, with fallbacks to maximize uptime.

By distilling knowledge from the larger DeepSeek R1 model, the distilled model provides strong performance with reduced computational requirements. It is ready for both research and commercial use; for more details, visit the DeepSeek website. With open weights available for self-hosting, R1 gives organizations that require on-premise deployment of reasoning AI considerable flexibility. The model supports a 128K context window and integrates well with existing DeepSeek infrastructure.

Because the model is open source, it can also be accessed through third-party providers and clients. This article walks through accessing DeepSeek's models through those providers and clients.
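Provider routing with fallbacks, as described above, is normally expressed in the request body. The sketch below makes several assumptions that should be checked against OpenRouter's documentation: the OpenAI-compatible chat completions endpoint, the model slug `deepseek/deepseek-r1-distill-llama-8b`, and a `provider` object with `order` and `allow_fallbacks` fields. The helper names `build_payload` and `send`, the `OPENROUTER_API_KEY` environment variable, and the provider names are illustrative placeholders.

```python
import json
import os
import urllib.request

# Assumed endpoint and model slug; verify both against the OpenRouter docs.
OPENROUTER_URL = "https://openrouter.ai/api/v1/chat/completions"
MODEL_SLUG = "deepseek/deepseek-r1-distill-llama-8b"


def build_payload(prompt, provider_order=None, allow_fallbacks=True,
                  max_tokens=1024):
    """Build a chat-completions request body (no network access)."""
    payload = {
        "model": MODEL_SLUG,
        "messages": [{"role": "user", "content": prompt}],
        "max_tokens": max_tokens,
    }
    if provider_order:
        # Assumed routing preferences: try providers in this order and,
        # if allowed, fall back to others when they are unavailable.
        payload["provider"] = {
            "order": list(provider_order),
            "allow_fallbacks": allow_fallbacks,
        }
    return payload


def send(payload, api_key):
    """POST the payload and return the decoded JSON response."""
    request = urllib.request.Request(
        OPENROUTER_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    key = os.environ.get("OPENROUTER_API_KEY")  # hypothetical variable name
    body = build_payload("Why is the sky blue?",
                         provider_order=["ProviderA", "ProviderB"])
    if key:
        print(send(body, key))
    else:
        print(json.dumps(body, indent=2))  # dry run without credentials
```

Separating payload construction from the network call keeps the routing preferences easy to inspect and test without spending credits.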
