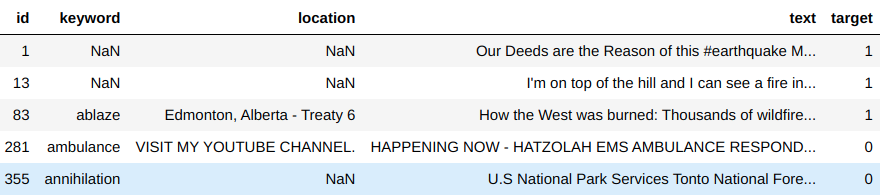

BERT Text Classification (TF): Kaggle Competition, Disaster Tweets Classification

GitHub (Toygarr): Classification of Disaster Related Tweets

Bidirectional Encoder Representations from Transformers (BERT) is a language model introduced in October 2018 by researchers at Google. [1][2] It learns to represent text as a sequence of vectors using self-supervised learning, and it uses an encoder-only transformer architecture. BERT is pretrained on unlabeled text with two objectives: predicting masked tokens in a sentence, and predicting whether one sentence follows another. Because some tokens are masked at random, the model has to use the text on both the left and the right of each mask, which gives it a more thorough understanding of context.
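The random masking step described above can be sketched in a few lines of plain Python. This is a minimal illustration, not BERT's actual preprocessing code: the function name `mask_tokens`, the `[MASK]` placeholder string, the 15% default rate, and the whitespace tokenization are all assumptions made for the example.

```python
import random

MASK_TOKEN = "[MASK]"  # placeholder string, assumed here for illustration


def mask_tokens(tokens, mask_prob=0.15, seed=None):
    """Randomly hide tokens and remember the originals as prediction targets.

    Returns (masked, targets), where `masked` is a copy of `tokens` with some
    entries replaced by MASK_TOKEN and `targets` maps each masked position to
    the original token the model would be asked to predict.
    """
    rng = random.Random(seed)  # local generator so results are reproducible
    masked = list(tokens)
    targets = {}
    for i, tok in enumerate(tokens):
        if rng.random() < mask_prob:
            targets[i] = tok
            masked[i] = MASK_TOKEN
    return masked, targets


# Hypothetical example sentence, split on whitespace for simplicity.
tokens = "forest fire near la ronge sask canada".split()
masked, targets = mask_tokens(tokens, mask_prob=0.3, seed=0)
print(masked)
print(targets)
```

During pretraining, the model sees `masked` as input and is scored on how well it recovers the tokens in `targets`. Each hidden token can sit anywhere in the sentence, so both the left and the right context are useful for the prediction.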

GitHub (Vikrantrajput7): Disaster Tweets Classification

Unlike recent language representation models, BERT is designed to pre-train deep bidirectional representations from unlabeled text by jointly conditioning on both left and right context in all layers. It is open source, and the bidirectional representations it learns significantly improve contextual understanding across many different natural language processing (NLP) tasks. In the following, we'll explore BERT models from the ground up: what they are, how they work, and, most importantly, how to use them in your projects.
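To make "conditioning on both left and right context" concrete, the toy sketch below compares which neighbouring tokens are available when representing one position under a left-to-right setup and under a bidirectional one. It only illustrates the idea of context visibility. It is not how BERT is implemented, and the helper name `visible_context` and the example sentence are hypothetical.

```python
def visible_context(tokens, position, bidirectional=True):
    """Return the (left, right) context available for tokens[position].

    A left-to-right model only sees the tokens before the position;
    a bidirectional model also sees the tokens after it.
    """
    left = tokens[:position]
    right = tokens[position + 1:] if bidirectional else []
    return left, right


tokens = ["the", "bank", "of", "the", "river"]

# Left-to-right: "bank" is represented using only "the".
print(visible_context(tokens, 1, bidirectional=False))
# (['the'], [])

# Bidirectional: "of the river" is also visible, which disambiguates "bank".
print(visible_context(tokens, 1, bidirectional=True))
# (['the'], ['of', 'the', 'river'])
```

In this example the words that disambiguate "bank" all come after it, so only the bidirectional view has access to them.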

GitHub (Raklugrin01): Disaster Tweets Analysis and Classification

BERT is a deep learning language model designed to improve the efficiency of NLP tasks. It is best known for its ability to take context into account by analyzing the relationships between the words in a sentence in both directions, which helps computers understand ambiguous language by using the surrounding text. Google open-sourced it as a new technique for NLP pre-training.
