A Deep Dive into Transformers with TensorFlow and Keras: Part 1

In this tutorial, you will learn about the evolution of the attention mechanism that led to the seminal Transformer architecture. This lesson is the 1st in a 3-part series on NLP 104. To learn how the attention mechanism evolved into the Transformer architecture, just keep reading.

A step-by-step guide to constructing and training a custom GPT language model from the ground up using TensorFlow and Keras.
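
A GPT-style language model predicts each token only from the tokens before it, so its self-attention must not look ahead. Below is a minimal sketch of such a causal (look-ahead) mask, written in plain NumPy rather than TensorFlow so it runs without extra dependencies. `causal_mask` and `masked_softmax` are hypothetical helper names chosen for illustration, not part of any library or of the guide mentioned above.

```python
import numpy as np


def causal_mask(seq_len: int) -> np.ndarray:
    """Lower-triangular boolean mask: True where query i may attend to key j (j <= i)."""
    return np.tril(np.ones((seq_len, seq_len), dtype=bool))


def masked_softmax(scores: np.ndarray, mask: np.ndarray) -> np.ndarray:
    """Softmax over the last axis after setting disallowed (future) positions to -inf."""
    scores = np.where(mask, scores, -np.inf)
    # Subtract the row max for numerical stability; the diagonal is always allowed,
    # so every row has at least one finite entry.
    scores = scores - scores.max(axis=-1, keepdims=True)
    weights = np.exp(scores)  # exp(-inf) == 0, so future positions get zero weight
    return weights / weights.sum(axis=-1, keepdims=True)


if __name__ == "__main__":
    scores = np.zeros((4, 4))  # equal raw scores for every query/key pair
    weights = masked_softmax(scores, causal_mask(4))
    print(np.round(weights, 3))
```

With equal raw scores, row i spreads its weight evenly over positions 0 through i, and every entry above the diagonal is zero. In Keras, `tf.keras.layers.MultiHeadAttention` accepts a boolean `attention_mask` (and, in recent TensorFlow versions, a `use_causal_mask` argument) for the same purpose.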

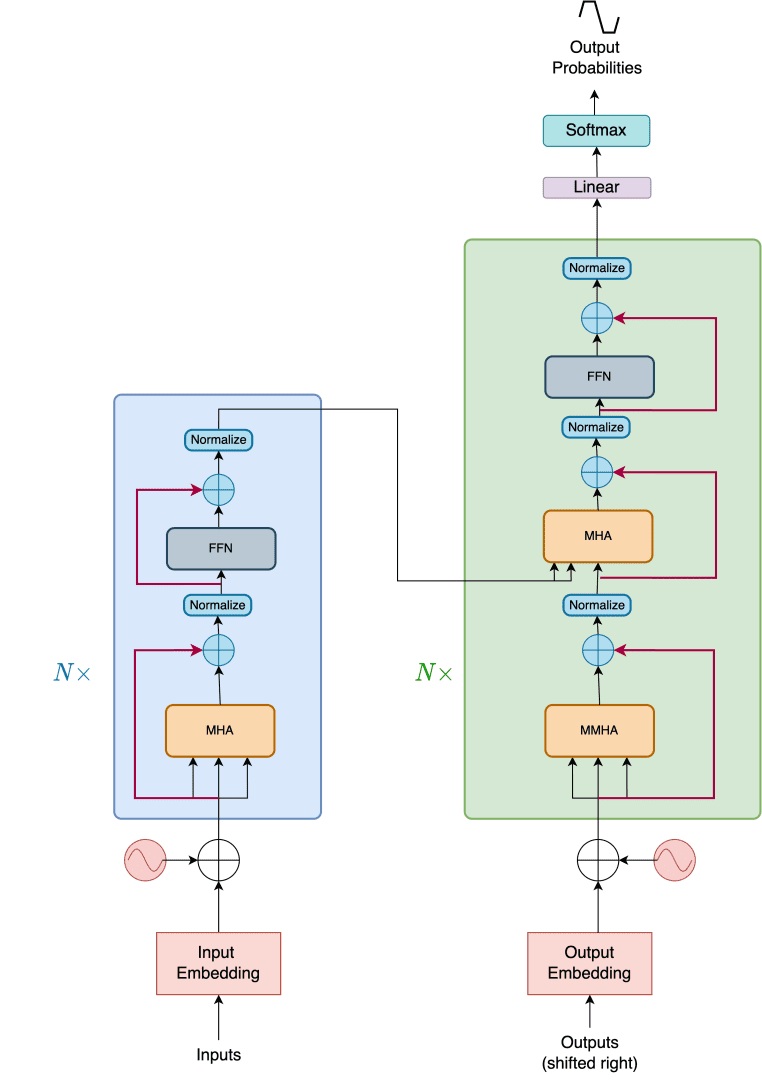

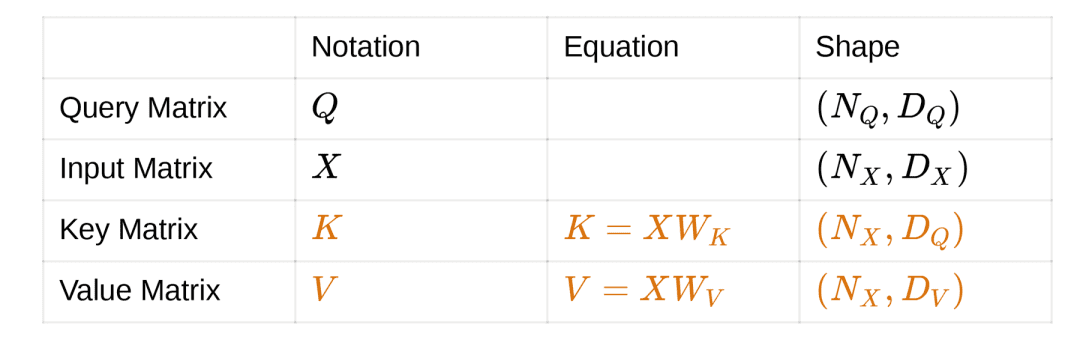

This tutorial demonstrates how to create and train a sequence-to-sequence Transformer model to translate Portuguese into English. The Transformer was originally proposed in "Attention Is All You Need" (Vaswani et al., 2017).

Transformers are deep learning architectures designed for sequence-to-sequence tasks such as language translation and text generation. They use a self-attention mechanism to capture long-range dependencies within input sequences.

The tutorial covers the essential components of the Transformer, including the self-attention mechanism, the feed-forward network, and the encoder-decoder architecture. The implementation uses the Keras API in TensorFlow and demonstrates how to train the model on a toy dataset for machine translation. To get the most out of it, it helps to know the basics of text generation and attention mechanisms. A Transformer is a sequence-to-sequence encoder-decoder model, similar to the model in the NMT with attention tutorial.
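
The self-attention mechanism described above computes softmax(QK^T / sqrt(d_k)) V, where the queries Q, keys K and values V are all linear projections of the same input sequence. The following is a minimal single-head sketch in NumPy (not the tutorial's Keras code); the projection matrices are random stand-ins for learned weights, and the sizes are illustrative assumptions.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def scaled_dot_product_attention(q, k, v):
    """softmax(Q K^T / sqrt(d_k)) V for inputs of shape (seq_len, d)."""
    d_k = q.shape[-1]
    scores = q @ k.T / np.sqrt(d_k)   # (seq_q, seq_k) similarity matrix
    weights = softmax(scores, axis=-1)  # each row sums to 1
    return weights @ v, weights


def self_attention(x, w_q, w_k, w_v):
    """Self-attention: queries, keys and values are all projections of the same x."""
    return scaled_dot_product_attention(x @ w_q, x @ w_k, x @ w_v)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    seq_len, d_model, d_k = 5, 8, 4          # illustrative sizes, not from the tutorial
    x = rng.normal(size=(seq_len, d_model))  # stand-in for 5 token embeddings
    w_q, w_k, w_v = (rng.normal(size=(d_model, d_k)) for _ in range(3))
    out, attn = self_attention(x, w_q, w_k, w_v)
    print(out.shape, attn.shape)             # (5, 4) (5, 5)
```

Because every row of `attn` covers all five positions, the first token can draw on the last one in a single step regardless of the distance between them; this is the sense in which self-attention captures long-range dependencies. Keras provides a multi-head version of this computation as `tf.keras.layers.MultiHeadAttention`.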

Our end goal remains to apply the complete model to natural language processing (NLP). In this tutorial, you will discover how to implement the Transformer encoder…

I'm following this book to teach myself about the Transformer architecture in depth. Some excellent resources I've come across along the way include "Building Transformer Models with Attention: Implementation from Scratch in TensorFlow Keras" and "A Deep Dive into Transformers with TensorFlow and Keras: Part 1", a tutorial on the evolution of the attention module into the Transformer architecture (estimated reading time: 21 minutes).

In this article, we've explored the key components of Transformers and provided a hands-on code example using TensorFlow and Keras. As you dive deeper into NLP, understanding Transformers will prove a valuable asset, opening doors to cutting-edge research and applications.
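
A Transformer encoder stacks identical blocks, each made of a self-attention sublayer and a position-wise feed-forward network, with a residual connection followed by layer normalization around each sublayer (the post-norm arrangement used in the original Transformer paper). The sketch below assumes a single attention head, random untrained weights, and layer normalization without a learned scale and shift. It uses NumPy so the data flow is visible; a Keras version would typically assemble the same block from `tf.keras.layers.MultiHeadAttention`, `tf.keras.layers.Dense` and `tf.keras.layers.LayerNormalization`. `EncoderBlock` is a hypothetical name, not a library class.

```python
import numpy as np


def softmax(x, axis=-1):
    x = x - x.max(axis=axis, keepdims=True)  # numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def layer_norm(x, eps=1e-6):
    """Normalize each position's feature vector to zero mean, unit variance (no learned scale/shift)."""
    mean = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mean) / np.sqrt(var + eps)


class EncoderBlock:
    """Hypothetical single-head, post-norm Transformer encoder block with random weights."""

    def __init__(self, d_model, d_ff, seed=0):
        rng = np.random.default_rng(seed)
        scale = 1.0 / np.sqrt(d_model)
        # Query, key, value and output projections (random stand-ins for learned weights).
        self.w_q, self.w_k, self.w_v, self.w_o = (
            rng.normal(scale=scale, size=(d_model, d_model)) for _ in range(4))
        self.w_1 = rng.normal(scale=scale, size=(d_model, d_ff))
        self.b_1 = np.zeros(d_ff)
        self.w_2 = rng.normal(scale=1.0 / np.sqrt(d_ff), size=(d_ff, d_model))
        self.b_2 = np.zeros(d_model)

    def self_attention(self, x):
        q, k, v = x @ self.w_q, x @ self.w_k, x @ self.w_v
        weights = softmax(q @ k.T / np.sqrt(q.shape[-1]))
        return (weights @ v) @ self.w_o

    def feed_forward(self, x):
        # Position-wise FFN: the same two-layer MLP is applied to every position independently.
        hidden = np.maximum(0.0, x @ self.w_1 + self.b_1)  # ReLU
        return hidden @ self.w_2 + self.b_2

    def __call__(self, x):
        x = layer_norm(x + self.self_attention(x))   # sublayer 1: attention + residual + norm
        return layer_norm(x + self.feed_forward(x))  # sublayer 2: FFN + residual + norm


if __name__ == "__main__":
    x = np.random.default_rng(42).normal(size=(6, 16))  # 6 tokens, d_model = 16
    block = EncoderBlock(d_model=16, d_ff=64)
    y = block(x)
    print(y.shape)  # (6, 16)
```

The block maps a (seq_len, d_model) input to an output of the same shape, which is what allows several encoder blocks to be stacked on top of one another.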
